I thought I’d better post the outcome of a recent experiment using Processing and Tooll3 together to transform incoming MIDI notes and controller information into realtime visual output.

The MIDI and sounds are generated by hardware synths – Roland SH-4D and Elektron Model Cycles.

Processing is used to create and manipulate animated geometric shapes that correspond to notes produced by individual synth tracks, building on previous work undertaken in p5.js. After significant stress testing, it turned out that Java-based Processing is slightly better suited to this purpose than JavaScript-based p5.js, particularly in terms of response time and overall stability.

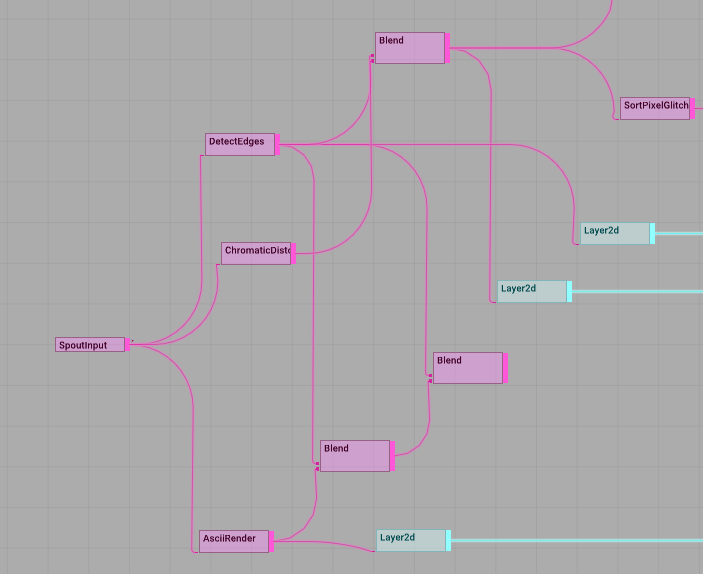

Processing forwards the live image via the Spout library to Tooll3, a free VJ platform, which is used to add effects. Tooll3 has amazing visual capabilities, although it suffers from a somewhat limited MIDI implementation and is currently PC-only.

Check the results out below.

If you’re interested in more detail, read on.

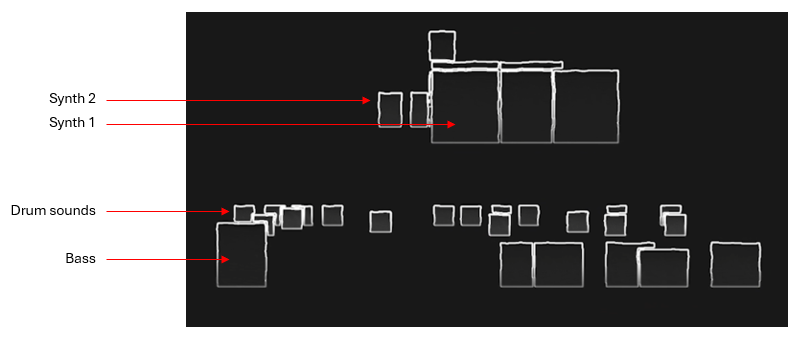

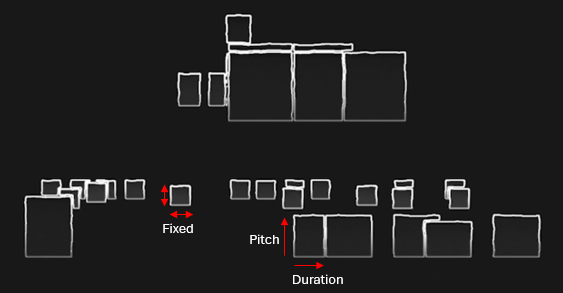

Processing is used to create block shapes for each MIDI note. Each instrument is assigned to a unique MIDI channel and this distinction is used to create a separate line of shapes for each instrument.
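A minimal sketch of the channel-to-row idea described above, assuming evenly spaced horizontal rows. The class name `ChannelRows`, the method `rowCenterY`, and the wrap-around behaviour for extra channels are illustrative assumptions, not the code used in the project.

```java
// Hypothetical helper: maps a MIDI channel to the vertical centre of its row.
final class ChannelRows {
    private ChannelRows() {}

    /**
     * @param channel      zero-based MIDI channel (0-15)
     * @param rowCount     number of instrument rows on screen (assumed layout)
     * @param screenHeight height of the drawing area in pixels
     * @return y coordinate of the centre of the row for this channel
     */
    static float rowCenterY(int channel, int rowCount, float screenHeight) {
        if (rowCount <= 0) {
            throw new IllegalArgumentException("rowCount must be positive");
        }
        // Wrap channels beyond rowCount back onto existing rows.
        int row = Math.floorMod(channel, rowCount);
        float rowHeight = screenHeight / rowCount;
        return rowHeight * (row + 0.5f);
    }
}
```

With four rows on an 800-pixel-high canvas, channel 0 would sit at the centre of the top row and channel 3 at the centre of the bottom row.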

Drum shapes (kick drum, snare etc.) have fixed dimensions; all other shapes are influenced by MIDI note duration and pitch.
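The sizing rule might be sketched as follows. The text does not say which dimension each property drives, so mapping duration to width and pitch to height is an assumption here, as are the class name `NoteShapeSize` and all of the constants.

```java
// Hypothetical sizing rule: drums are fixed, other notes scale with duration and pitch.
final class NoteShapeSize {
    private NoteShapeSize() {}

    // All constants below are illustrative values, not taken from the project.
    static final float DRUM_WIDTH = 40f;
    static final float DRUM_HEIGHT = 40f;
    static final float PIXELS_PER_MS = 0.1f;
    static final float MIN_HEIGHT = 10f;
    static final float MAX_HEIGHT = 100f;

    /** Returns {width, height} in pixels for a note. */
    static float[] size(boolean isDrum, int pitch, float durationMs) {
        if (isDrum) {
            return new float[] {DRUM_WIDTH, DRUM_HEIGHT};
        }
        // Assumption: longer notes are wider.
        float width = Math.max(0f, durationMs) * PIXELS_PER_MS;
        // Assumption: higher pitches are taller; pitch is clamped to the MIDI range 0-127.
        int clampedPitch = Math.max(0, Math.min(127, pitch));
        float height = MIN_HEIGHT + (clampedPitch / 127f) * (MAX_HEIGHT - MIN_HEIGHT);
        return new float[] {width, height};
    }
}
```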

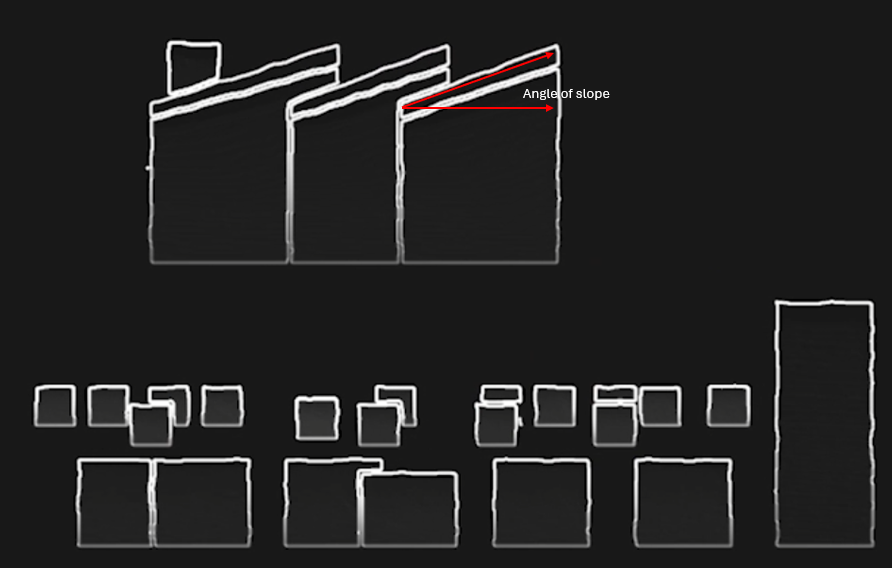

The slope of a note shape is controlled by MIDI controller information; for Synth 1, this relates to the cutoff frequency of the sound.
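One way to turn a controller value into a slope is to map the 0-127 range onto a signed vertical offset for one end of the shape. This is only a sketch under that assumption; `NoteSlope`, `slopeOffset`, and the symmetric range are hypothetical and not necessarily how the project does it.

```java
// Hypothetical mapping from a MIDI CC value to a signed slope offset in pixels.
final class NoteSlope {
    private NoteSlope() {}

    /**
     * @param ccValue   MIDI controller value, nominally 0-127
     * @param maxOffset largest vertical displacement in pixels (illustrative)
     * @return offset in the range -maxOffset to +maxOffset
     */
    static float slopeOffset(int ccValue, float maxOffset) {
        // Clamp out-of-range values so a bad message cannot distort the shape.
        int clamped = Math.max(0, Math.min(127, ccValue));
        float normalized = clamped / 127f;          // 0.0 to 1.0
        return (normalized * 2f - 1f) * maxOffset;  // -max to +max
    }
}
```

The returned offset could be added to the y coordinate of the shape's trailing corners, so that a low controller value tilts the shape one way and a high value tilts it the other.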

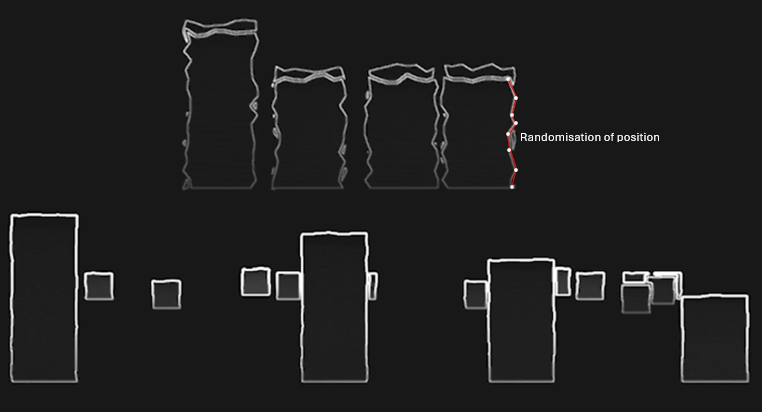

Each note shape has many additional vertices along each side that can be displaced using random values to create a wobbling effect. In this case, Synth 1 shapes wobble in response to MIDI pitch modulation data (think of the classic ’80s synth pitch modulation).
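The wobble could be sketched like this: subdivide an edge into extra vertices and push each interior vertex along the edge normal by a random amount. The class `WobbleEdge`, its parameters, and the choice to keep the corner vertices fixed are assumptions for illustration; `amount` stands in for whatever value is derived from the incoming modulation data.

```java
import java.util.Random;

// Hypothetical edge wobble: subdivides a line and jitters interior vertices.
final class WobbleEdge {
    private WobbleEdge() {}

    /**
     * Returns segments + 1 points, each as {x, y}, along the edge from
     * (x0, y0) to (x1, y1). Interior points are displaced along the edge
     * normal by a random value between -amount and +amount.
     */
    static float[][] wobble(float x0, float y0, float x1, float y1,
                            int segments, float amount, Random rng) {
        if (segments < 1) {
            throw new IllegalArgumentException("segments must be at least 1");
        }
        float dx = x1 - x0;
        float dy = y1 - y0;
        float len = (float) Math.sqrt(dx * dx + dy * dy);
        // Unit normal to the edge; zero for a degenerate (zero-length) edge.
        float nx = len == 0f ? 0f : -dy / len;
        float ny = len == 0f ? 0f : dx / len;

        float[][] points = new float[segments + 1][2];
        for (int i = 0; i <= segments; i++) {
            float t = (float) i / segments;
            // Corners stay fixed so adjacent edges of the shape still meet.
            boolean corner = (i == 0 || i == segments);
            float offset = corner ? 0f : (rng.nextFloat() * 2f - 1f) * amount;
            points[i][0] = x0 + dx * t + nx * offset;
            points[i][1] = y0 + dy * t + ny * offset;
        }
        return points;
    }
}
```

Calling this once per frame with a fresh random draw gives a jittery outline; scaling `amount` by the current modulation depth makes the wobble follow the synth.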

Processing is also used to colour the shapes. Colour changes are triggered either manually using a MIDI controller or via non-rendering MIDI information. Essentially, I used one of the Elektron sequencer channels to control visual changes matching the current phrase. The MIDI data from this channel did not itself produce shapes.
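The split between rendering and non-rendering channels might be handled roughly as below. The control channel number, the class name `ControlTrack`, and the idea of using note pitch to pick a palette are all assumptions for illustration; the post does not specify them.

```java
// Hypothetical routing for a dedicated, non-rendering control channel.
final class ControlTrack {
    private ControlTrack() {}

    // Assumed zero-based channel reserved for visual control; not from the original setup.
    static final int CONTROL_CHANNEL = 15;

    /** True if notes on this channel should be drawn as shapes. */
    static boolean rendersShape(int channel) {
        return channel != CONTROL_CHANNEL;
    }

    /** Picks a colour palette from a control note's pitch, wrapping around the palette list. */
    static int paletteIndex(int pitch, int paletteCount) {
        if (paletteCount <= 0) {
            throw new IllegalArgumentException("paletteCount must be positive");
        }
        return Math.floorMod(pitch, paletteCount);
    }
}
```

A note-on handler would first check `rendersShape(channel)`; if it returns false, the note is treated as a cue (for example, a palette switch) and no shape is created.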

All the shapes are drawn in 2D with a scale factor derived from notional z-axis values using the following simple perspective calculation, where fl represents focal length.

scale = fl / (fl + z)
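As a small worked sketch of that formula, the helper below computes the scale and applies it to an x coordinate relative to a vanishing point. The names `Perspective`, `scale`, and `projectX`, and the use of a screen-centre vanishing point, are assumptions for illustration.

```java
// Simple perspective helper based on scale = fl / (fl + z).
final class Perspective {
    private Perspective() {}

    /** Scale factor for a point at depth z, given focal length fl. */
    static float scale(float focalLength, float z) {
        float denominator = focalLength + z;
        if (denominator <= 0f) {
            // The point is at or behind the viewer; the formula is not meaningful here.
            throw new IllegalArgumentException("focalLength + z must be positive");
        }
        return focalLength / denominator;
    }

    /** Hypothetical use: scales x towards an assumed vanishing point at centerX. */
    static float projectX(float x, float centerX, float focalLength, float z) {
        return centerX + (x - centerX) * scale(focalLength, z);
    }
}
```

With a focal length of 300, a shape at z = 0 is drawn at full size, while one at z = 300 is drawn at half size and pulled halfway towards the vanishing point.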

I had considered that I might change perspective values on the fly but in the end didn’t pursue this.

Processing is great for handling MIDI and drawing shapes but not so good for post-processing, which is where an application like Tooll3 comes in handy. It was straightforward enough to pipe the fullscreen Processing image through to Tooll3 using Spout and then apply various image filters as shown below.

The MIDI visual control track was used to trigger and switch between these various effects. I didn’t get on very well with the MIDI implementation offered by Tooll3 but it is possible this has since improved.

This was a fun project to work on and Tooll3 is quite inspiring, although it doesn’t have anywhere near the level of community support that TouchDesigner does. I’m planning to investigate the latter more thoroughly as a realtime AV platform some time soon.