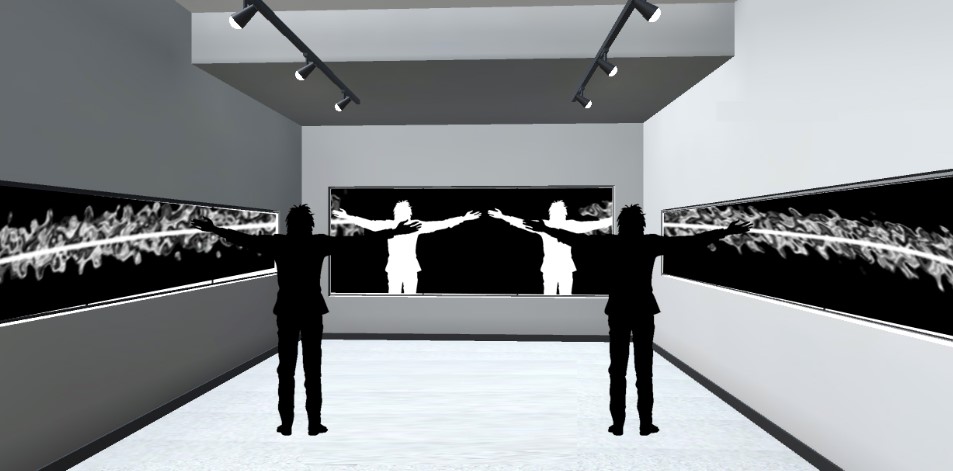

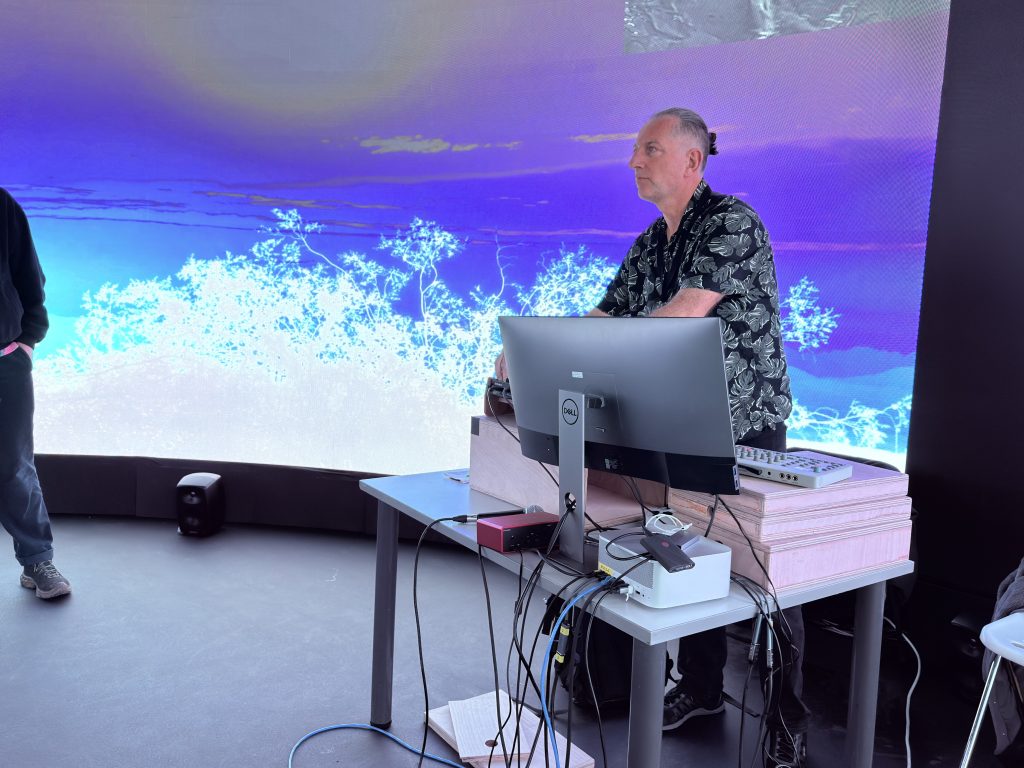

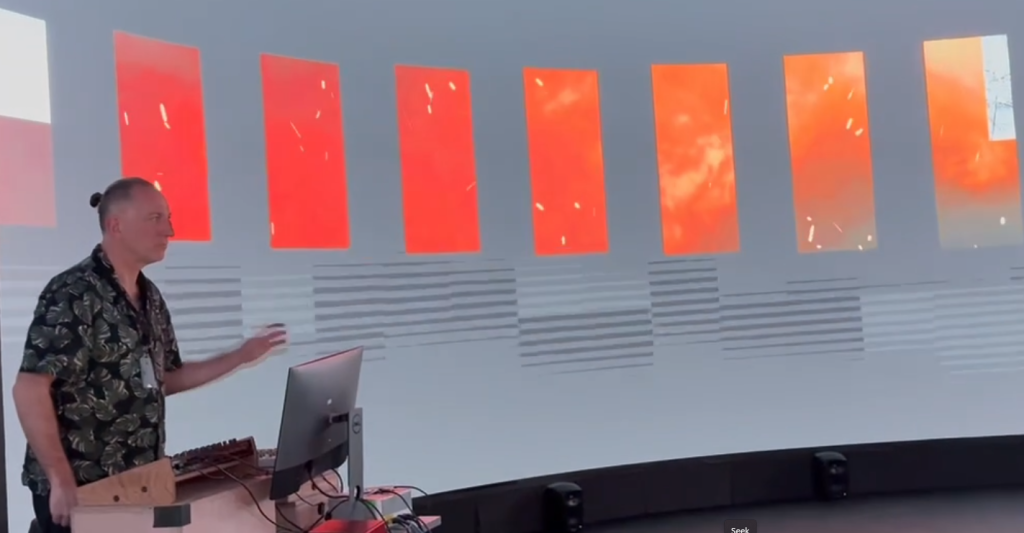

On March 18th 2026 I performed an audiovisual work which, although unnamed and effectively still a work in progress, is the product of around six months of research and development. The performance took place within the 10-metre diameter, 4-metre high, circular LED screen at Norwich University of the Arts’ Immersive Visualisation and Simulation Lab, as part of the Collusion-led Art Tech Play programme.

The work is inspired by the well-known verse from the Bhagavad Gita, Chapter 11, Verse 32, which has been variously translated:

“I am Time, the mighty cause of world destruction, Who has come forth to annihilate the worlds.”

Translated by Winthrop Sargeant (1984)

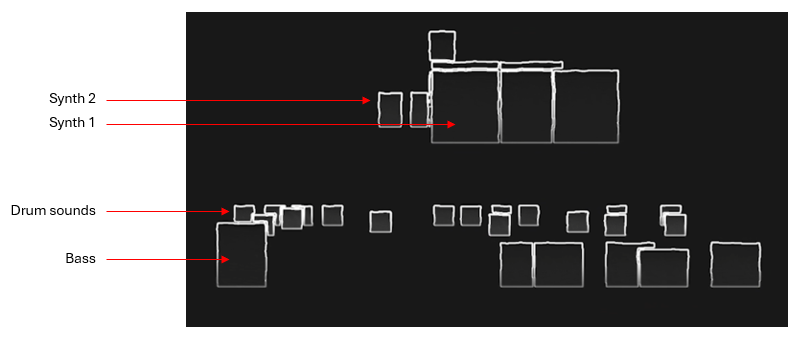

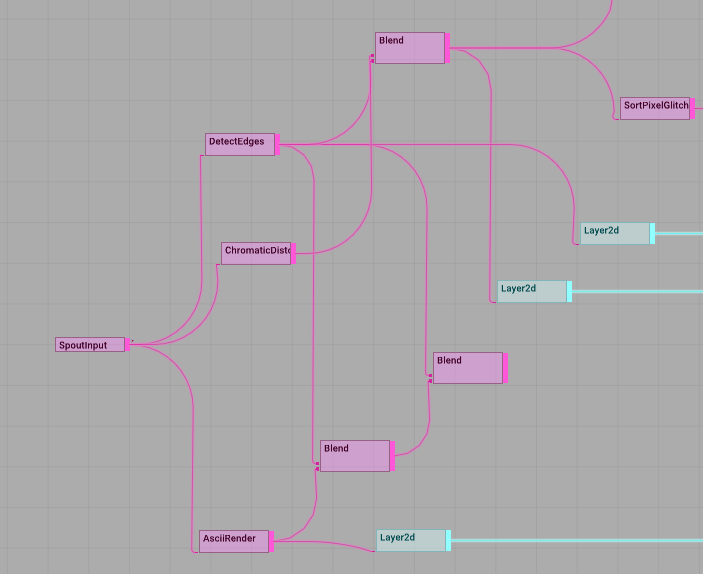

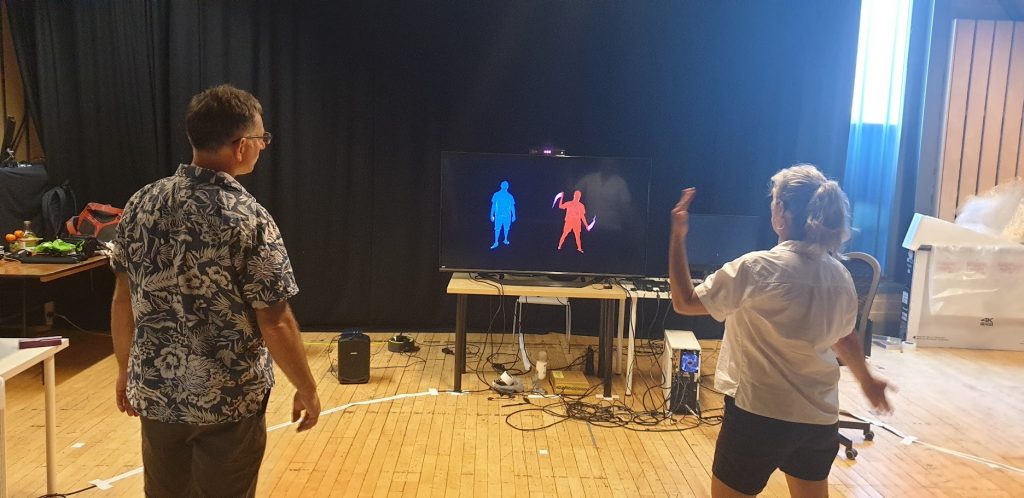

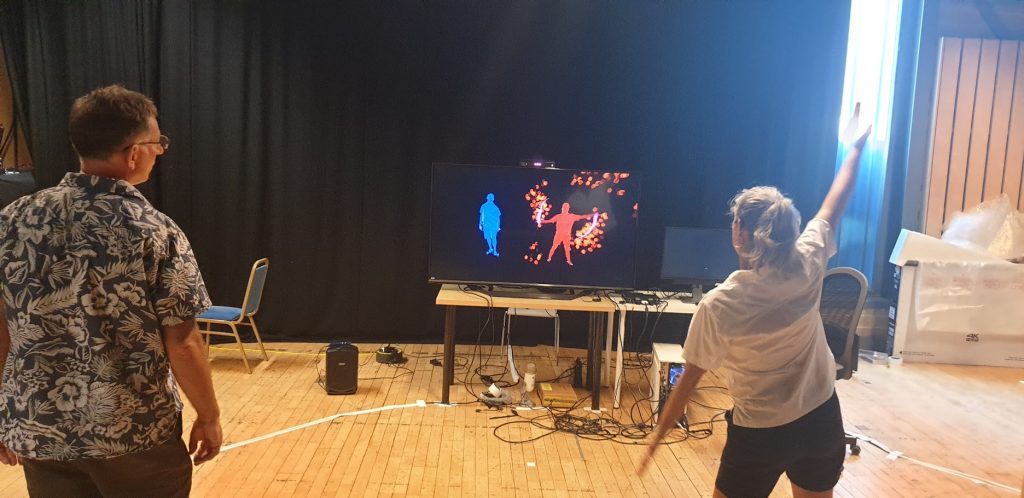

The work explores themes of destruction, natural resilience, and human responsibility (or lack of it) and uses TouchDesigner to create visual outcomes that respond in real time to performed electronic music, using both instrument MIDI data and audio data as inputs. Although I have been experimenting with audiovisual performance for some time, this work is somewhat new territory for me as the content is largely videographic.

It is easier than ever to collect libraries of videos, many offered free by individual videographers on sites such as https://www.pexels.com/. Some are highly composed, others more naturalistic. Increased ownership of high quality video cameras and the proliferation of drones are both leading to greater numbers of ‘citizen videographers’ producing compelling video content.

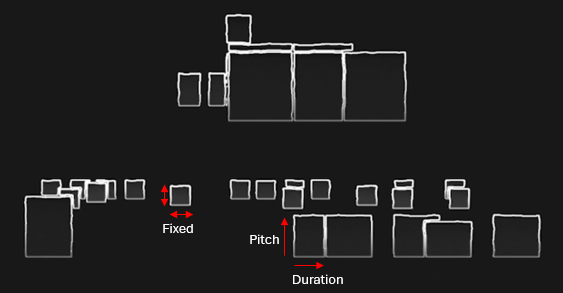

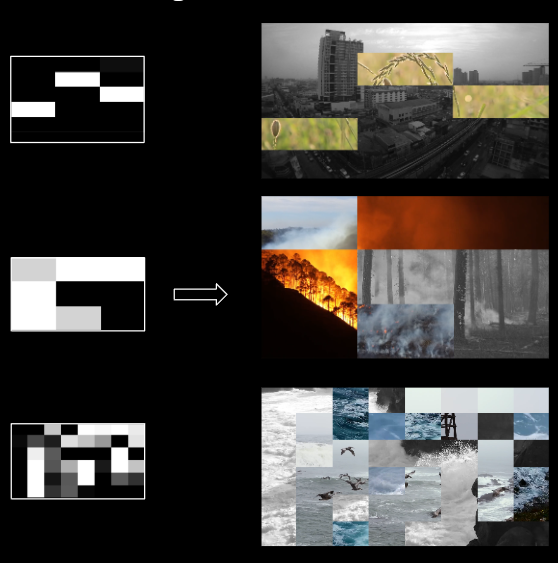

The principal mechanic of the work is a noise grid used to mix different elements of video that change according to a weighting of the following factors:

- Random/Noise

- Musical time (i.e. changing every 2 bars)

- Response to specific instrument data (e.g. a kick drum)
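As a rough illustration of how these three factors might be blended, the sketch below computes a single weighted score per grid cell and compares it with a threshold. It is plain Python rather than the actual TouchDesigner network, and the names `Weights`, `change_score`, `should_change`, the default weights and the threshold are all assumptions made for the example.

```python
import random
from dataclasses import dataclass


@dataclass
class Weights:
    """Hypothetical weighting of the three factors (values are illustrative)."""
    noise: float = 0.2         # random/noise
    musical_time: float = 0.5  # e.g. a change every 2 bars
    instrument: float = 0.3    # e.g. a kick drum hit


def change_score(w, noise_value, on_boundary, kick_hit):
    """Blend the three factors into one score between 0 and 1."""
    total = w.noise + w.musical_time + w.instrument
    if total <= 0:
        return 0.0
    blended = (w.noise * noise_value
               + w.musical_time * float(on_boundary)
               + w.instrument * float(kick_hit))
    return blended / total


def should_change(w, bar, is_bar_start, kick_hit, rng,
                  bars_per_change=2, threshold=0.5):
    """Decide whether a grid cell should switch to a different video."""
    # A musical-time boundary is the first beat of every Nth bar.
    on_boundary = is_bar_start and bar % bars_per_change == 0
    return change_score(w, rng.random(), on_boundary, kick_hit) >= threshold


if __name__ == "__main__":
    rng = random.Random(1)
    for bar in range(4):
        print(bar, should_change(Weights(), bar, True, False, rng))
```

Shifting weight towards `noise` would make changes feel more erratic, while shifting it towards `musical_time` would lock them to the bar structure.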

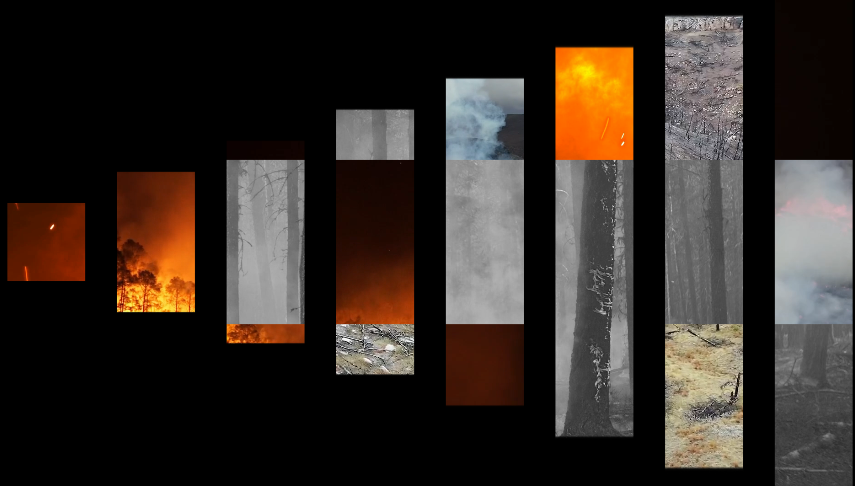

The graphic below demonstrates the relationship between the noise grid and resulting video collage.

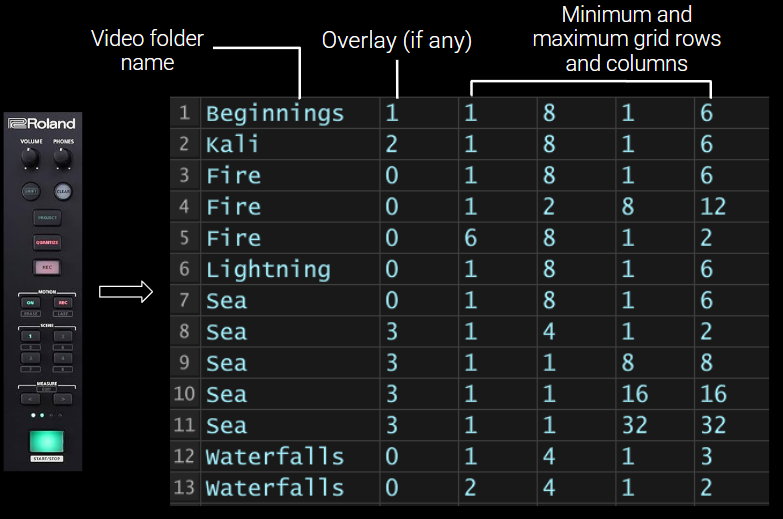

Content is organised into ‘scenes’ which map directly to scene buttons on the Roland MC-707 hardware used to drive the sound. Each scene has a number of parameters including:

- A folder of video files to feed into the grid

- A possible overlay layer to show on top of the grid

- Parameters that relate to the randomisation of video grid divisions (i.e. a minimum and maximum number of columns and rows)
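One way to picture the per-scene data is as a small record keyed by scene button number. The following is only a sketch in plain Python: the `Scene` fields, the folder and overlay names, and the `random_grid` helper are hypothetical and are not taken from the actual project.

```python
import random
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Scene:
    """Hypothetical per-scene settings, one per hardware scene button."""
    video_folder: str       # folder of video files to feed into the grid
    overlay: Optional[str]  # optional overlay layer, or None
    min_cols: int
    max_cols: int
    min_rows: int
    max_rows: int


def random_grid(scene: Scene, rng: random.Random) -> Tuple[int, int]:
    """Pick a random number of columns and rows within the scene's limits."""
    cols = rng.randint(scene.min_cols, scene.max_cols)
    rows = rng.randint(scene.min_rows, scene.max_rows)
    return cols, rows


# Illustrative scene table; folder and overlay names are placeholders.
SCENES = {
    1: Scene("videos/scene_01", None, 1, 4, 1, 3),
    2: Scene("videos/scene_02", "overlays/mono_02.png", 2, 8, 1, 4),
}

if __name__ == "__main__":
    rng = random.Random(7)
    for button, scene in SCENES.items():
        print(button, scene.video_folder, random_grid(scene, rng))
```

Keeping the limits on the scene, rather than hard-coding them in the grid logic, means each scene button can bring its own visual density along with its video folder.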

The graphic below demonstrates the relationship between the hardware scene buttons and the scene data stored in TouchDesigner.

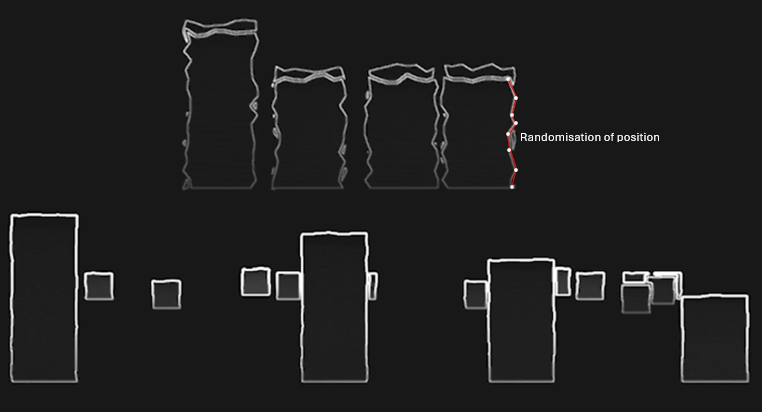

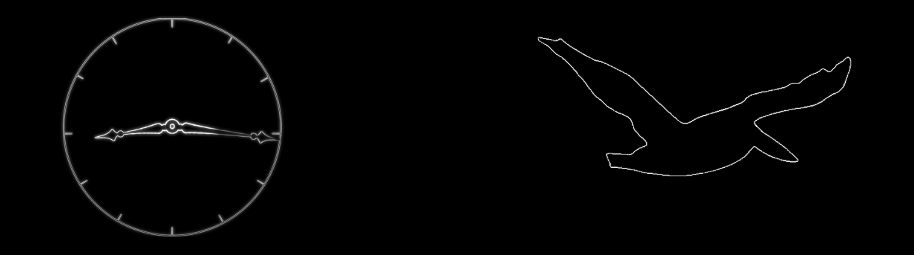

For some scenes, a monochrome overlay is shown on top of the video grid.

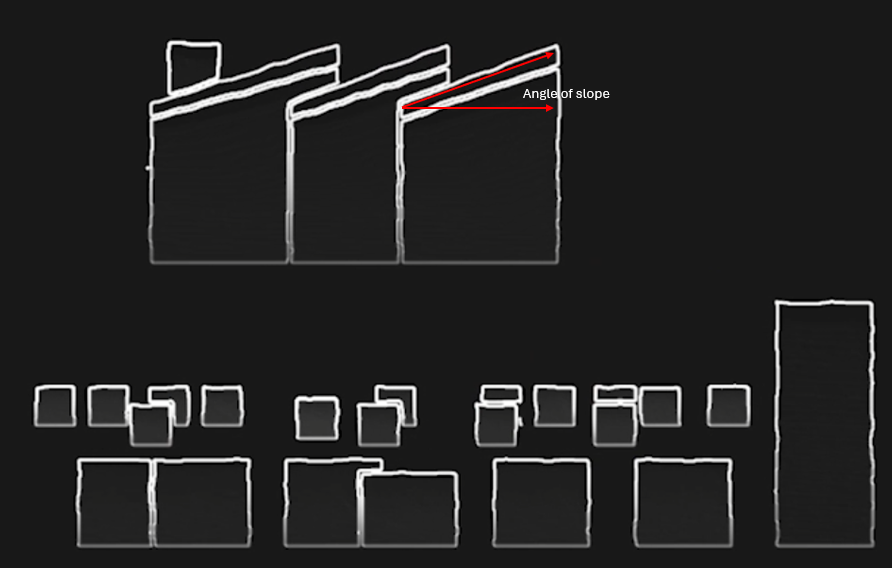

Incidental performative effects are mapped to audio parameters. For example, modulating the release of the snare envelope (how long or short the drum sounds) results in the video grid cells rotating to the left or right.
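A mapping like this can be sketched as a simple scaling function. The version below assumes the snare release arrives as a 7-bit MIDI controller value (0 to 127), that the midpoint means no rotation, and that negative angles mean a rotation to the left; the function name `release_to_rotation` and the 45-degree limit are likewise illustrative choices, not details of the actual patch.

```python
def release_to_rotation(cc_value: int, max_degrees: float = 45.0) -> float:
    """Map a 0-127 controller value to a rotation angle in degrees.

    Assumed convention: short release -> negative angle (left),
    long release -> positive angle (right), midpoint -> roughly zero.
    """
    cc = max(0, min(127, cc_value))    # clamp to the 7-bit MIDI range
    normalised = (cc - 63.5) / 63.5    # rescale to the range -1.0 .. 1.0
    return normalised * max_degrees


if __name__ == "__main__":
    for value in (0, 32, 64, 96, 127):
        print(value, round(release_to_rotation(value), 2))
```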

A classic performance effect within many electronic music genres is ‘beat repeat’, where a small sample of audio is repeated in time with the music. By using MIDI information to trigger audio beat repeats, a visual equivalent can also be triggered. In the case of a 16th note (semiquaver) beat repeat, a visual equivalent builds from left to right every semiquaver. Other repeat intervals, e.g. quaver or triplet, are represented similarly.

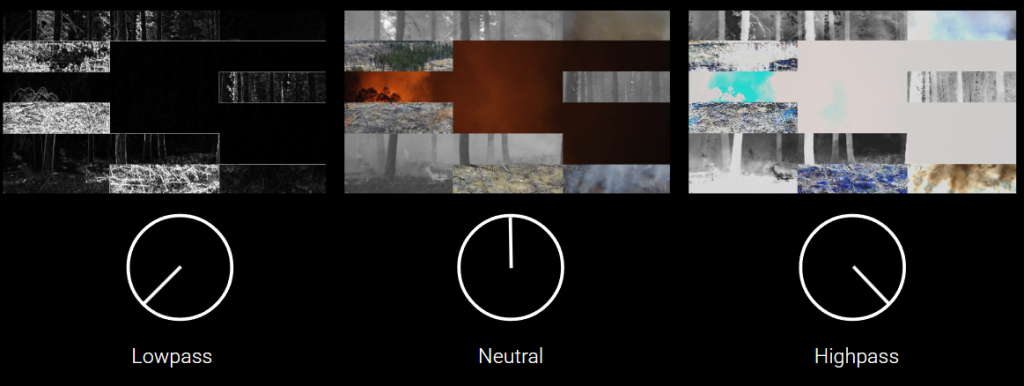
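The left-to-right build can be thought of as counting how many repeat intervals have elapsed since the beat repeat was triggered. The sketch below is an assumption-laden illustration: the function name `columns_lit`, the interval table (with "triplet" taken to mean an eighth-note triplet), the column count, and the wrap-around behaviour once the row is full are not taken from the actual patch.

```python
from fractions import Fraction

# Repeat intervals measured in beats (crotchets). Illustrative values.
REPEAT_INTERVALS = {
    "quaver": Fraction(1, 2),
    "triplet": Fraction(1, 3),
    "semiquaver": Fraction(1, 4),
}


def columns_lit(beats_since_start: Fraction, interval: Fraction,
                total_columns: int) -> int:
    """Return how many columns are showing, counted from the left.

    One more column appears each time `interval` elapses; once every
    column is showing, the build starts again from a single column.
    """
    steps = int(beats_since_start // interval)  # whole intervals elapsed
    return steps % total_columns + 1


if __name__ == "__main__":
    interval = REPEAT_INTERVALS["semiquaver"]
    for tick in range(10):
        position = tick * interval
        print(position, columns_lit(position, interval, 8))
```

Using `Fraction` rather than floats keeps triplet positions exact, which avoids a column appearing one step late because of rounding.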

A master audio sweep filter, another common electronic music performance effect, is aligned with post-processing visual filters, i.e. the final stages of the visual composition process. A black and white edge detection image is used for the audio lowpass, and a high-brightness ‘blow out’ for the audio highpass.
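To illustrate the idea, the sketch below treats the sweep as a single bipolar control, where negative values mean lowpass and positive values mean highpass, and converts it into mix amounts for the two visual treatments. The bipolar control, the function name `sweep_to_visual_mix` and the linear scaling are assumptions for the example; the real controller and filter chain may well behave differently.

```python
def sweep_to_visual_mix(sweep: float) -> dict:
    """Convert a sweep position (-1.0 .. 1.0) into visual filter amounts.

    Assumed convention: -1.0 is a fully closed lowpass, 0.0 is no
    filtering, and +1.0 is a fully engaged highpass.
    """
    s = max(-1.0, min(1.0, sweep))    # clamp to the expected range
    return {
        "edge_mix": max(0.0, -s),     # lowpass side -> edge detection image
        "blowout_mix": max(0.0, s),   # highpass side -> brightness blow out
    }


if __name__ == "__main__":
    for position in (-1.0, -0.5, 0.0, 0.5, 1.0):
        print(position, sweep_to_visual_mix(position))
```

Because only one of the two amounts is ever non-zero, the picture is untouched when the audio filter is at rest.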

The work was adapted for the 360-degree screen relatively quickly, so it was very much a test. However, I did have the chance to perform the piece twice, each time for around 15-20 minutes, which gave me a good opportunity to test out the performance mappings.

Although I don’t have a decent video capture of the event, fellow artist @oculardelusion kindly posted a couple of videos on Instagram – https://www.instagram.com/p/DWFMEk8AqM9/

Feedback from the night was generally positive, although I didn’t get the chance to talk to many people in depth as I was busy performing and also co-organising the night! One interesting comment I received was that it was notable, and possibly unusual, for an AV performer to be looking at the large screen rather than the computer screen during a performance. In fact the computer screen had nothing on it! But more importantly, this felt like the right thing to do in a large-scale improvised performance situation.

The research leads me to consider a number of themes for potential future exploration.

Theme #1: Mapping and Translation Strategies

How do different MIDI-to-visual mapping approaches affect both performer agency and audience perception? This could involve exploring one-to-one mappings versus many-to-many relationships, examining when literal translation (note-to-shape) works better than abstracted relationships, and potentially developing a framework for “meaningful” versus “arbitrary” mappings based on practical investigation.

Theme #2: Collage Semantics & Meaning Making

What happens when found footage collage meets electronica’s functional dance context? This could involve exploring whether narrative fragments emerge from triggering choices, investigating how juxtaposition of source materials creates meaning (or deliberately avoids it), and examining the politics of source material selection: whose images, from what contexts, and how does recontextualization operate?

Theme #3: Dramaturgical Structure

Electronica has inherited DJ culture’s arc of tension/release across a set. How do visuals participate in this dramaturgical structure? This could involve developing a taxonomy of visual intensities that parallel musical energy (breakdowns, drops, rollers), and/or investigating whether visuals can work against the music’s energy to create productive friction.